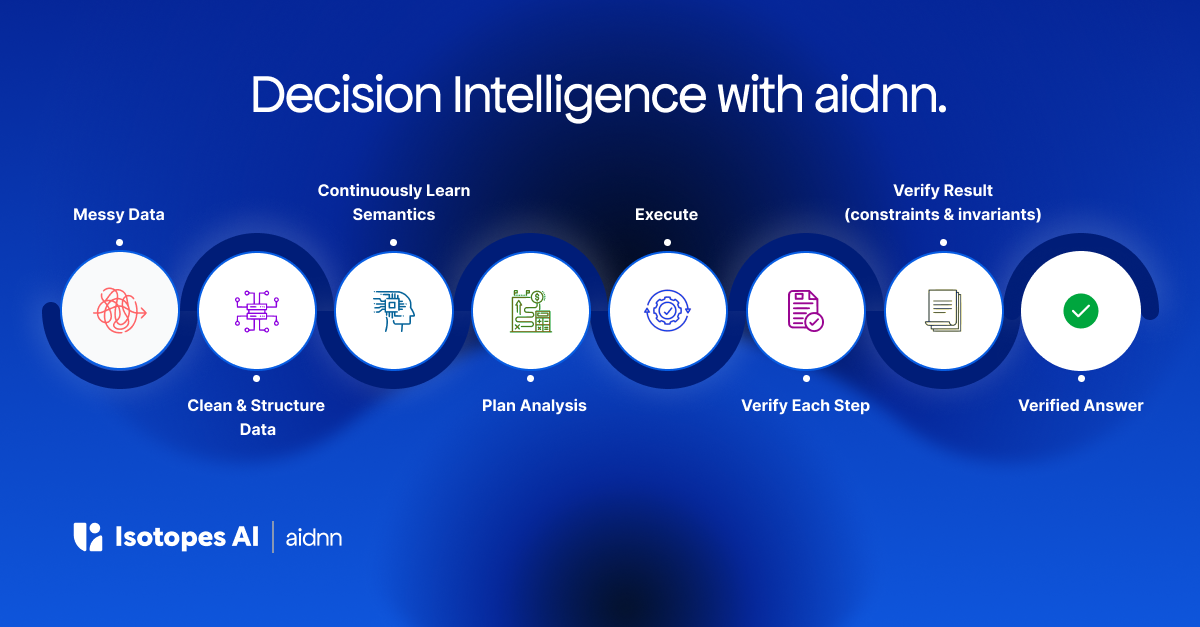

🚀 Just Launched: Get the recipe to go from messy data to verified analytics in minutes with aidnn. Check out our blog to learn more.

April 13, 2026

Every company has an analyst who knows where the bodies are buried. They know which tables are trustworthy and which silently produce duplicates. They know that "revenue" means ARR to finance and GMV to the product team. They know that the Stripe data lags the warehouse by 48 hours, that the partner feed uses a different fiscal calendar, and that customer IDs don't match across systems without a specific mapping table nobody documented.

This knowledge took months to build. It's among the most valuable analytical assets the organization has. And it is completely absent from every AI analytics tool on the market.

Every chat-with-data tool starts from scratch every session. You explain the context. You correct the output. You steer it toward the right interpretation. The next day, you do it again. The tool learned nothing.

At least Sammy Jankins had the tattoos…

At best, you can bootstrap it with some metadata and dbt model descriptions. But that captures schema structure, not business meaning. It tells the system that a column called arr_monthly exists. It doesn't tell the system that your team stopped trusting that column six months ago because a migration introduced rounding errors, or that the correct source is now a manually maintained spreadsheet that your FP&A lead updates every Thursday.

The knowledge that makes analytics trustworthy is not schema documentation. It's operational intelligence: how the organization actually thinks about its data, which sources are authoritative for which questions, what reconciliation approaches produce consistent results, and what changed last quarter that invalidated the old assumptions.

This knowledge lives in people's heads. When they leave, it leaves with them. When they're on vacation, the analysis waits. When a new analyst joins, they spend months rebuilding it through trial and error.

No AI tool captures it. No AI tool learns from it. No AI tool compounds it.

Learning isn't a prompt. It's an architecture.

A system that truly learns an organization's analytical knowledge needs to solve four hard problems: what to capture, how to rank what it knows, how to resolve conflicts, and how to forget what's no longer true.

What to capture. Organizational knowledge comes from sources that look nothing alike: schema metadata, dbt transformation logic, uploaded policy documents, analyst corrections during live sessions, admin-authored business rules, metric definitions that live in email threads. Each has different granularity, reliability, and shelf life. A self-learning system can't just dump all of this into a single context window. It needs to structure it so that every piece of knowledge is individually retrievable and individually citable.

How to rank it. Not all knowledge is equal. A metric definition that an admin explicitly authored is more authoritative than a pattern the system inferred from usage. A fact validated through mathematical verification is more trustworthy than one extracted from an uploaded PDF. A self-learning system needs a clear authority hierarchy so that when sources conflict, the system knows which one to trust.

How to resolve conflicts. Conflicts are inevitable. One source says fiscal year starts in January. Another says April. A system without conflict detection picks arbitrarily and nobody knows. A self-learning system needs to detect contradictions and surface them rather than silently choosing.

How to forget. This is the one nobody talks about. Business rules change. Metric definitions evolve. Entity mappings get updated. A system that only learns creates stale knowledge lock-in, where outdated patterns confidently produce wrong answers. A self-learning system needs to unlearn, correcting itself as the business changes without destroying the audit trail of what it used to know.

aidnn's knowledge architecture is designed around these four problems.

From the first connection, the system bootstraps from everything the organization has already codified: dbt models, metadata, schema documentation, existing pipeline code, dashboards, uploaded reference documents. It doesn't treat these as a flat context dump. It decomposes them into structured, individually citable units of knowledge, each carrying provenance and a trust ranking. When the system retrieves knowledge to plan or verify an analysis, it reasons about what's relevant and how much to trust it.

Then, with every interaction, the knowledge compounds through three channels.

Plan feedback captures how the organization thinks about its data. When an analyst corrects an assumption, specifies which source is authoritative, or steers the system toward a different approach, that preference becomes persistent organizational knowledge that influences future analyses.

Result ratings capture quality standards specific to the organization. When a team rates an output, that judgment encodes what "good" looks like for this business: not generic accuracy, but this organization's specific expectations.

Verification outcomes capture mathematically validated analytical patterns. When aidnn's verification agents test constraints against a completed analysis and the constraints hold, the system records which approaches produce consistent results for this customer's data. Proven ground truth, not heuristic, not opinion.

Over time, patterns that appear repeatedly are automatically consolidated and promoted. Candidate business rules are surfaced for admin review. No automatic promotion to the highest trust tiers ever happens without a human in the loop.

When conflicts between knowledge sources are detected, they're surfaced with full evidence rather than silently resolved. And when things change, the system adapts. User corrections supersede old knowledge. Outdated entries are deprecated, never silently deleted, so the audit trail remains intact. Accumulated intelligence doesn't become accumulated liability.

Six months in, aidnn knows your business. It knows which sources are authoritative for which questions. It knows how your team defines its key metrics, and how those definitions differ from department to department. It knows which reconciliation approaches produce consistent results for your specific data landscape. It knows what changed last quarter and has already adapted.

This isn't configuration you could hand to another tool. It's institutional intelligence that was earned through hundreds of interactions, corrections, validations, and verified analyses. It's the knowledge your best analyst carries in their head, except it doesn't leave when they do, it doesn't take a vacation, and it gets sharper every week.

Every new tool your team evaluates will start from zero. aidnn won't. That gap widens every quarter. The system that knows your business will always outperform the one that's still learning it.

The architecture is the window. The flywheel is the moat. And the moat belongs to you.